In his recent essay, Dario Amodei described AI as being in a turbulent “adolescence” phase: powerful, fast-moving, and unpredictable without the right constraints.

In customer support, that unpredictability shows up as inaccurate responses or hallucinations, inconsistent outcomes, and automation that deflects instead of resolves.

When you’re dealing with customer queries, account details, payments, and personal data, unreliable or incorrect AI isn’t just a bad interaction; it’s a trust problem.

That's why 2026 is the year of AI guardrails.

Not because AI models aren’t getting smarter, but because reliability, customer value, and performance matter more than hype.

In this article, we’ll explore the importance of AI guardrails in the context of customer service and contact centres.

We’ll cover:

- What AI guardrails are, and why they're crucial in customer support

- The most common AI pitfalls guardrails prevent in customer interactions

- A guardrails stack for reliable AI customer service

- How to ensure your AI guardrails are working

TL;DR:

Guardrails are the controls and processes that keep customer service AI accurate and safe. They prevent the most common AI pitfalls, including hallucinations, inconsistent responses, and automation that deflects instead of resolves.

To deliver reliable AI support and interactions, you need a guardrails stack that covers:

- Knowledge guardrails: Ground AI answers in approved sources, use a “look up, then answer” approach, and return “unsure” when information isn’t available.

- Behaviour guardrails: Use prompts and clear boundaries to keep the AI on-brand, on-policy, and within scope.

- Escalation guardrails: Keep humans in the loop with warm, seamless handovers, especially for low confidence or high-risk topics.

- Quality guardrails: Test before launch, then monitor and optimise using transcripts, analytics, and regular performance reviews.

- Security & compliance guardrails: Protect sensitive data with strong security controls and support compliance requirements such as CCPA, GDPR, and PCI DSS or HIPAA where relevant.

Why guardrails are essential in customer service AI

In customer support, every conversation is packed with information and context, from order history and account details to payment queries, personal customer data, troubleshooting steps, and policies that cannot be bent.

Customers aren't just looking for a friendly answer. They're looking for the right answer and a swift resolution.

That's why an incorrect or misleading response is not just a minor bug.

If AI confidently misstates a returns policy, gives inaccurate product/service information, or mishandles a sensitive account question, the impact shows up fast.

Customers get frustrated, repeat contacts increase, queues grow, and the business takes on unnecessary risk.

Even when the information provided is technically correct, AI automation that deflects rather than resolves still erodes trust because it wastes customer time.

In 2026, the bar for customer service AI is simple: accuracy, consistency, customer value, and measurable outcomes matter more than flashy capability.

The most impressive demo is moot if the system cannot perform reliably in production.

Guardrails are what make reliability possible by keeping AI grounded in approved information, aligned with your processes, and accountable to the metrics that matter for customer experience.

What are AI guardrails?

In customer service, AI guardrails are the controls and processes that keep automated support reliable and safe.

They prevent an AI assistant from giving inaccurate or inconsistent information, trying to handle issues it shouldn’t, or blocking a customer’s path to resolution.

Put simply, guardrails ensure your AI behaves like a well-trained team member, adding value to the customer experience and helping rather than hindering.

This goes beyond eliminating hallucinations. Good guardrails keep AI working within clear boundaries:

- On-policy: Following your company’s approved rules, processes, and escalation paths.

- On-truth: Using verified sources of correct, up-to-date information.

- On-track: Moving the interaction forward toward resolution, not deflection.

- Escalating appropriately: Handing over to a human quickly and smoothly when needed (e.g., when it cannot answer confidently or when the topic is high risk).

Ultimately, the goal isn’t just avoiding wrong answers.

It’s about making sure your AI delivers high-quality service and consistent outcomes at scale, while protecting customer trust and meeting the requirements your team operates under every day.

The key pitfalls AI guardrails prevent in CX

When AI customer service goes wrong, it usually fails in a few predictable ways.

The challenge is that these issues don't just create poor experiences. They also negatively impact efficiency and brand loyalty, with a knock-on effect on revenue.

This is why guardrails aren't just “anti-hallucination” controls. They’re about performance as much as prevention.

Below are the three most common pitfalls AI guardrails are designed to prevent in customer interactions.

1. Hallucinations & incorrect information

Hallucinations, or “confidently wrong” answers, are among the most talked-about AI risks.

Without guardrails, AI may fabricate details about your business or policies that sound plausible.

For example, a product that doesn't exist, a delivery window it cannot verify, or a refund promise an agent cannot honour.

In customer support, even a small inaccuracy can create bigger downstream problems, because the customer takes that answer as a commitment.

2. Inconsistent responses

Even if the AI is generally accurate, inconsistency can still be problematic.

The same question might get different answers depending on how it’s phrased, which channel the customer uses, or what context the AI picks up from the conversation.

This can leave customers confused and makes it harder for teams to standardise service quality and achieve consistent outcomes.

3. Low-value automation

Sometimes the AI isn’t technically wrong; it’s just not useful.

In these cases, it might provide a generic answer, ask the customer to repeat information, or keep going round in circles without offering progression towards resolution.

This is where automation becomes deflection. It reduces costs on paper, but it doesn't reduce effort for the customer.

In fact, it often drives repeat contacts, which increase costs and inefficiency.

Overall, guardrails make AI a valuable asset to businesses and customers by ensuring answers are accurate, outcomes are consistent, and automation focuses on resolution, not deflection.

The Guardrails Stack for reliable AI customer service

If you want your AI to consistently perform optimally and safely, you need more than one protocol.

You need a stack of guardrails in place that all work together.

In this section, we’ll break down the key components of a guardrails stack for AI customer service and why each one matters.

1. Knowledge guardrails (grounding)

The most effective way to reduce hallucinations or inaccuracies is to limit what the AI can draw from in the first place.

In customer service, answers that are guesses, fabrications, or even vague aren't acceptable. Your AI responses need to be clear, factual, and grounded in approved sources of truth.

That’s why knowledge guardrails focus on:

- Using company-approved information, such as your help centre content, support articles, policy documents, product documentation, webpages, FAQs, etc.

- Keeping responses grounded in your company knowledge (i.e. ensuring the AI only uses approved content or data when answering customer queries, rather than outside sources or the model’s general world knowledge).

- Prioritising a “look up, then answer” approach over “generate an answer”, ensuring the AI retrieves the relevant knowledge/information from an approved source.

This is where establishing a comprehensive, company-approved knowledge base for your AI is essential.

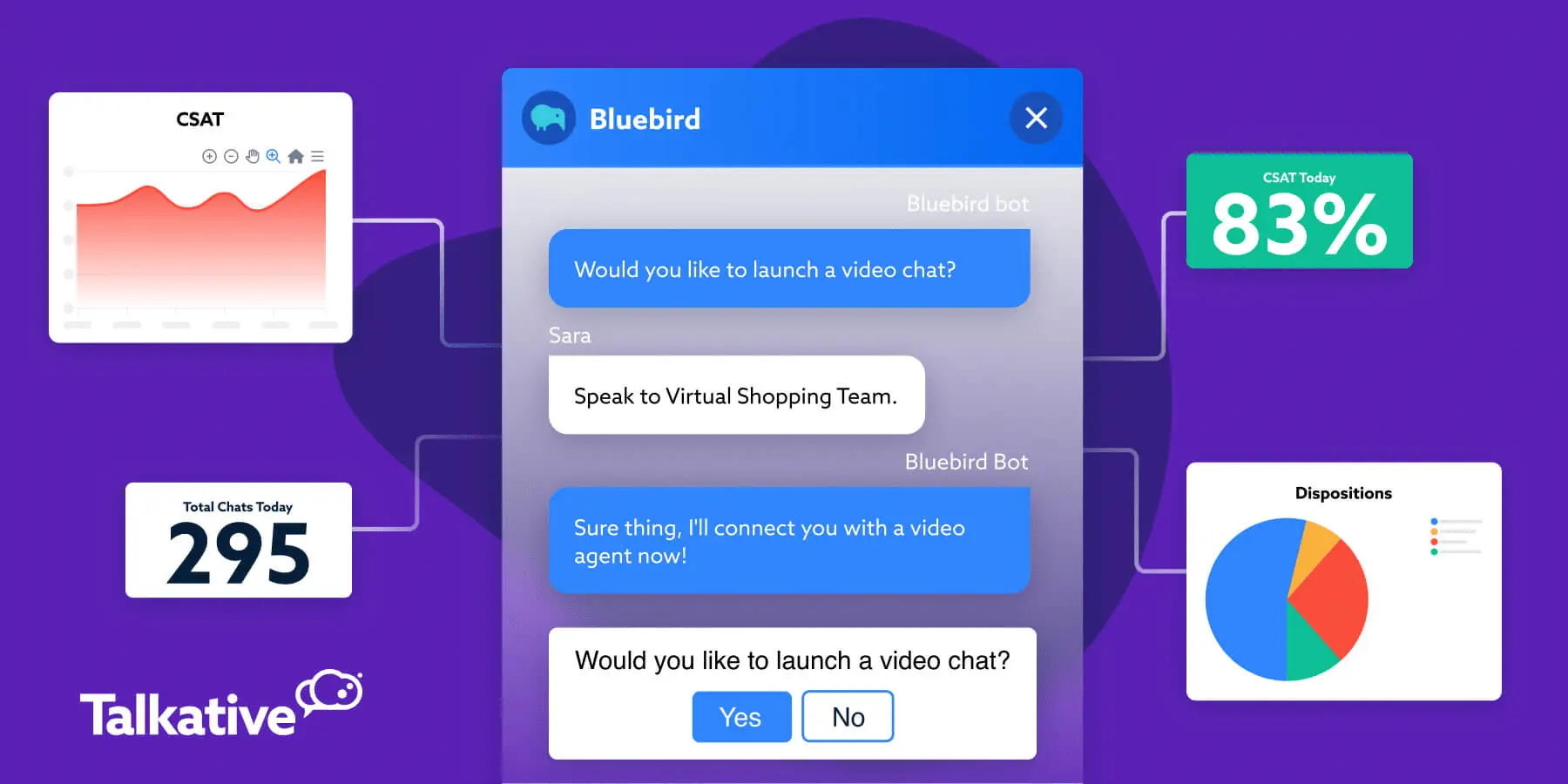

With Talkative, you can build private knowledge bases using a combination of data sources. This includes your website content, SharePoint documents, various file types (e.g. PDF, CSV, TXT, JSON), and free text.

You can also securely integrate Talkative with other systems, such as your CRM or order management platform, allowing the AI to pull specific information from them when needed.

If the answer to a customer's question can’t be found in your knowledge base or connected systems, the AI will return an “unsure” response and escalate to a human, rather than attempt to fill in the gaps.
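To make the “look up, then answer” idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how Talkative or any particular platform implements grounding: the article texts are invented sample content, the keyword-overlap scoring stands in for a real retrieval system, and names such as `APPROVED_ARTICLES` and `look_up_then_answer` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical approved knowledge base (invented sample content).
APPROVED_ARTICLES = {
    "Returns policy": "Items can be returned within 30 days of delivery with proof of purchase.",
    "Delivery times": "Standard delivery takes 3 to 5 working days.",
}

# Common words ignored when matching a question to an article.
STOPWORDS = {"a", "an", "the", "is", "are", "can", "i", "my", "of", "to",
             "do", "you", "what", "how", "with", "be", "within", "for", "it", "your"}


@dataclass
class Answer:
    status: str            # "answered" or "unsure"
    text: str
    source: Optional[str]  # title of the approved article used, if any
    escalate: bool         # True when a human should take over


def _keywords(text: str) -> set:
    words = (w.strip(".,?!").lower() for w in text.split())
    return {w for w in words if w and w not in STOPWORDS}


def look_up_then_answer(question: str, min_overlap: int = 2) -> Answer:
    """Retrieve from approved content first; never generate a reply from nothing."""
    question_words = _keywords(question)
    best_title, best_score = None, 0
    for title, body in APPROVED_ARTICLES.items():
        score = len(question_words & _keywords(title + " " + body))
        if score > best_score:
            best_title, best_score = title, score

    # No sufficiently relevant approved source: say so and escalate.
    if best_title is None or best_score < min_overlap:
        return Answer("unsure", "I'm not sure about that, so I'm connecting you with a colleague.", None, True)

    # The reply is taken from the approved article, and the source is recorded.
    return Answer("answered", APPROVED_ARTICLES[best_title], best_title, False)
```

In this sketch, a question about the returns policy is answered from the “Returns policy” article, while a question about gift cards, which no approved article covers, comes back as “unsure” with `escalate` set to `True`. A production system would likely use semantic search rather than keyword overlap, but the control flow is the point: retrieval decides whether an answer is allowed at all.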

2. Behaviour guardrails (what the AI is allowed to do)

Even with strong knowledge grounding, you still need to define the AI's role and what “good” looks like in a customer interaction.

Behaviour guardrails set the rules for what the AI can and can’t do, and how it should communicate.

They typically include:

- Clear boundaries for what the AI can answer, what it must refuse, and what it must escalate.

- Tone and brand controls, so responses stay consistent across channels and teams.

- Policy and compliance constraints, so the AI doesn’t make promises it can’t keep or bend rules in the moment.

In practice, these guardrails are often enforced through AI prompts.

Prompts are essentially instructions that tell an AI agent what its role is and how it should respond to customer queries or requests.

They also shape the AI’s tone of voice, response style, and allowed behaviours, ensuring your AI stays aligned with your brand, use cases, and workflows, not just the model’s default behaviour.
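As a rough illustration of how boundaries and tone can be turned into instructions, the Python sketch below keeps a small topic policy and builds a prompt from it. Everything here is an assumption made for the example: the topic names, the `TOPIC_POLICY` table, and the prompt wording are hypothetical, and a real deployment would tune them to its own use cases.

```python
from enum import Enum


class Action(Enum):
    ANSWER = "answer"
    REFUSE = "refuse"
    ESCALATE = "escalate"


# Hypothetical policy: which topics the AI may answer, must refuse, or must escalate.
TOPIC_POLICY = {
    "order_status": Action.ANSWER,
    "returns": Action.ANSWER,
    "medical_advice": Action.REFUSE,
    "payment_dispute": Action.ESCALATE,
}


def allowed_action(topic: str) -> Action:
    # Topics missing from the policy default to escalation rather than a guess.
    return TOPIC_POLICY.get(topic, Action.ESCALATE)


def build_system_prompt(brand: str, tone: str) -> str:
    """Assemble role, tone, and boundaries into one set of instructions."""
    def topics(action: Action) -> str:
        names = sorted(t for t, a in TOPIC_POLICY.items() if a is action)
        return ", ".join(names) or "none"

    return "\n".join([
        f"You are a customer service assistant for {brand}.",
        f"Tone of voice: {tone}.",
        f"Answer only these topics: {topics(Action.ANSWER)}.",
        f"Politely decline these topics: {topics(Action.REFUSE)}.",
        f"Hand over to a human agent for these topics: {topics(Action.ESCALATE)}.",
        "If a request falls outside these lists, hand over to a human agent.",
        "Never promise refunds, discounts, or exceptions that are not in approved policy.",
    ])
```

Keeping the policy in one table means the prompt and any code-level checks, such as `allowed_action`, stay in agreement when the rules change.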

On the Talkative platform, AI prompts are written and easily configured using a prompt builder, allowing support teams to update and test them without needing advanced technical skills.


3. Escalation guardrails (when the AI should hand over to human agents)

No matter how advanced an AI agent is, there will always be interactions that need a human.

Some queries are complex, some are sensitive, and some simply require judgment, empathy, or discretion that customers expect from a person.

It’s critical that your AI can recognise these moments and escalate to a live agent at the right time.

Escalation guardrails keep risky or high-impact conversations from being handled automatically, and they protect the customer experience when uncertainty is high.

They also keep humans in the loop, which reduces the chance of errors, helps teams handle edge cases safely, and ensures customers feel supported when stakes or emotions are higher.

Common escalation rules include:

- Low confidence: The AI asks clarifying questions when it’s unsure, then hands over to a human agent if needed.

- High-risk intents: The AI initiates an automatic handoff for risky or emotionally charged topics (e.g. payment disputes, complaints, cancellations, or legal queries).

- Continuity: The AI provides full context and any captured details during the transfer, so agents start the conversation prepared, and customers don’t have to repeat themselves.

That last point matters more than many teams realise. A cold transfer forces the customer to start over, increasing frustration and extending handle time.

A warm handover, where the agent receives the conversation context and key details upfront, makes the experience feel cohesive and facilitates faster resolutions.
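The escalation rules and the warm handover described above can be sketched in a few lines of Python. This is a simplified illustration, not a specific product’s logic: the intent labels, the 0.7 confidence threshold, the one-clarification limit, and the payload fields are all assumptions chosen for the example.

```python
from dataclasses import dataclass, field

# Hypothetical intent labels treated as high risk.
HIGH_RISK_INTENTS = {"payment_dispute", "complaint", "cancellation", "legal"}


@dataclass
class Turn:
    speaker: str  # "customer" or "ai"
    text: str


@dataclass
class Conversation:
    intent: str
    confidence: float                 # assumed score between 0 and 1
    clarifications_asked: int = 0
    turns: list = field(default_factory=list)
    captured: dict = field(default_factory=dict)  # details collected so far


def next_step(conv: Conversation, min_confidence: float = 0.7,
              max_clarifications: int = 1) -> str:
    """Return "answer", "clarify", or "handover" for the current conversation."""
    # High-risk topics go straight to a human, whatever the confidence.
    if conv.intent in HIGH_RISK_INTENTS:
        return "handover"
    # Low confidence: ask a clarifying question first, then hand over.
    if conv.confidence < min_confidence:
        if conv.clarifications_asked < max_clarifications:
            return "clarify"
        return "handover"
    return "answer"


def handover_payload(conv: Conversation, reason: str) -> dict:
    """Bundle the context an agent needs so the customer does not repeat themselves."""
    return {
        "reason": reason,
        "intent": conv.intent,
        "captured_details": dict(conv.captured),
        "transcript": [f"{t.speaker}: {t.text}" for t in conv.turns],
    }
```

The payload is what separates a warm handover from a cold transfer: the agent sees the reason for escalation, the captured details, and the transcript before saying a word.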

That's why Talkative supports seamless, warm handoffs to human agents when the AI can’t resolve a query autonomously, ensuring the interaction stays smooth and focused on resolution.

4. Quality guardrails (evaluation + monitoring)

AI customer service isn't a “set and forget” solution.

Customer journeys change, policies update, and brands evolve. Quality guardrails make sure your AI performs optimally while remaining up-to-date, accurate, and useful over time.

AI guardrails for quality typically involve:

- Testing your use cases before go-live, using both common questions and edge cases.

- Tracking and monitoring performance post-launch, using key metrics, interaction transcripts, and AI analytics and reporting.

- Reviewing performance regularly, then refining your prompts, knowledge base, and workflows based on what you’re seeing.

Continuous quality monitoring and performance management are essential because AI outcomes can drift over time.

Any change in your policies, product catalogue, or customer needs can quickly lead to more “unsure” responses and escalations.

Quality guardrails help you catch issues early, identify gaps in your prompt or knowledge base, protect customer trust, and keep automation driving resolution.
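One way to approach pre-launch testing is a small regression suite that you re-run whenever prompts or knowledge change. The Python sketch below assumes the AI can be called as a function that returns a reply and a handover flag; `stub_assistant` is a stand-in invented for the example, and the pass criteria (an expected phrase and an expected handover decision) are deliberately simple.

```python
from typing import Callable, NamedTuple


class Case(NamedTuple):
    question: str
    must_include: str      # phrase a correct reply should contain ("" if none)
    expect_handover: bool  # whether the AI should escalate this question


class Reply(NamedTuple):
    text: str
    handover: bool


def run_suite(assistant: Callable[[str], Reply], cases: list) -> dict:
    """Run every case and report which questions failed."""
    failures = []
    for case in cases:
        reply = assistant(case.question)
        ok = (reply.handover == case.expect_handover
              and case.must_include.lower() in reply.text.lower())
        if not ok:
            failures.append(case.question)
    return {"passed": len(cases) - len(failures), "total": len(cases), "failures": failures}


def stub_assistant(question: str) -> Reply:
    # Hypothetical stand-in for the real AI, with invented reply text.
    if "return" in question.lower():
        return Reply("You can return items within 30 days.", False)
    return Reply("Let me connect you with a colleague.", True)
```

Mixing common questions with edge cases in the same suite makes gaps visible early: a failing case points to a missing article, an unclear prompt, or an escalation rule that needs adjusting.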

5. Security, privacy, & compliance guardrails

For customer service AI to be fully reliable and trustworthy, it must be safe, secure, and compliant with relevant regulations.

Any customer interaction can involve sensitive or personal information, which makes data security and AI compliance non-negotiable.

Strong guardrails here include:

- Data security and privacy features, such as end-to-end encryption, access controls, audit logs, retention controls, and automatic masking for sensitive data.

- Support for regulatory requirements such as CCPA, UK GDPR, and the EU AI Act, plus sector frameworks such as PCI DSS for payment processing or HIPAA in healthcare.

- Operational controls, including documented processes, auditing, clear customer disclosures where needed, and human escalation for high-risk topics.
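To show what automatic masking can look like in principle, here is a minimal Python sketch that hides card numbers in a message before it is stored or passed on. It is an illustrative assumption rather than any vendor’s implementation: it only covers 13 to 19 digit numbers that pass the Luhn checksum, and the choice to keep the last four digits visible is a common convention, not a compliance guarantee.

```python
import re

# Candidate card numbers: 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, used to avoid masking order numbers and other long digit runs."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def mask_card_numbers(text: str) -> str:
    """Replace all but the last four digits of likely card numbers with asterisks."""
    def _mask(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())
        if not luhn_valid(digits):
            return match.group()  # not a plausible card number, leave as-is
        return "*" * (len(digits) - 4) + digits[-4:]

    return CARD_PATTERN.sub(_mask, text)
```

For example, the widely used test number `4111 1111 1111 1111` becomes `************1111`, while a 13-digit order reference that fails the checksum is left untouched. Real masking would also need to cover other data types, such as security codes and account numbers.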

Talkative’s approach includes end-to-end encryption during processing, automatic masking of sensitive data such as card details, and a strict policy of not using customer data to train third-party AI models.

Our users can also control where customer information is processed and stored to support data sovereignty requirements.

For resilience, Talkative also includes disaster recovery processes, real-time monitoring, and regular penetration testing to help identify and address vulnerabilities proactively.

How to know your guardrails are working (metrics that matter)

When guardrails are effective, they improve your AI performance and outcomes.

The easiest way to confirm this is to measure and track the key performance indicators (KPIs) that matter most in customer service interactions.

Start with the checklist below, then refine it based on your goals and use cases.

- Accuracy rate: Are AI responses correct and based on approved information? This can be measured through QA sampling of conversations, knowledge base testing/reporting, and spot checks on high-volume queries.

- Containment quality: If the AI “contains” a conversation, did it do so in a way that actually helped the customer? Look at whether contained bot interactions end in a successful outcome, not just whether they avoided a handover.

- Resolution rate: Did the customer actually resolve their issue, or did they still need to contact you again? Resolution is often the clearest indicator of whether automation is adding value.

- Time to resolution: How quickly does the customer reach an outcome, whether that’s an AI-only resolution or a resolution after handover? This helps you spot experiences that feel slow or repetitive, even if they eventually succeed.

- First contact resolution (FCR): Are customers able to resolve their issues in a single interaction? If FCR drops, it’s often a sign of deflection, inconsistency, or missing knowledge.

- Average handling time (AHT): Is AI reducing the time it takes to resolve queries, either by speeding up self-service or supporting agents after handover?

- Escalation quality: Did the AI hand over at the right time, and did the agent receive enough context for a smooth transfer? Track both the timing of escalations and whether transfers reduce repeat questions and handle time.

- Repeat contact rate: Are customers returning with the same issue after interacting with the AI? A rising repeat rate often signals deflection, inconsistent answers, or gaps in the knowledge base.

- CX outcomes: Are your core CX metrics improving, such as CSAT, customer retention, wait times, and conversion, where relevant?
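Several of these KPIs can be calculated directly from interaction records. The Python sketch below uses simplified, assumed definitions: a repeat contact is the same customer returning with the same issue, and containment quality is the share of non-escalated interactions that ended resolved. The `Interaction` fields are hypothetical, so map them to whatever your reporting exports.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    customer_id: str
    issue: str       # hypothetical issue label, e.g. "refund"
    resolved: bool   # did the interaction end with the issue resolved?
    escalated: bool  # was it handed over to a human agent?


def guardrail_metrics(interactions: list) -> dict:
    """Compute a few headline rates from a list of Interaction records."""
    total = len(interactions)
    if total == 0:
        return {}

    resolved = sum(i.resolved for i in interactions)
    escalated = sum(i.escalated for i in interactions)
    contained = [i for i in interactions if not i.escalated]
    contained_resolved = sum(i.resolved for i in contained)

    # Count contacts where the same customer came back about the same issue.
    seen, repeats = set(), 0
    for i in interactions:
        key = (i.customer_id, i.issue)
        if key in seen:
            repeats += 1
        seen.add(key)

    return {
        "resolution_rate": resolved / total,
        "escalation_rate": escalated / total,
        "containment_quality": contained_resolved / len(contained) if contained else 0.0,
        "repeat_contact_rate": repeats / total,
    }
```

Tracked over time, these figures show whether a change to prompts, knowledge, or escalation rules improved outcomes or simply moved effort elsewhere.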

Guardrails are the protocols and processes that keep AI performance on track. These metrics are how you prove they’re working.

The takeaway

2026 is the year of AI guardrails, because reliable, trustworthy AI is what customers and brands actually need.

As AI continues to evolve, the CX teams that win will be those who operationalise and prioritise reliability.

That means implementing guardrails that keep AI accurate, consistent, and safe in real customer conversations, then proving and optimising performance over time.

In short, guardrails are becoming a differentiator.

They’re what turn AI from an impressive demo into brand-safe automation that earns trust and delivers measurable value in the real world.

If you’re exploring AI for your contact centre or customer service strategy, Talkative can help you put the right guardrails in place.

Reach out to our experts for advice and guidance, or book a demo to see reliable customer service AI in action.